Introduction

I make complex platforms legible by turning ambiguous systems into trusted workflows that teams can understand, review, and ship with confidence.

Snapshot

From Platform Complexity to Org Leverage

Leadership Thesis

The shortest version of my work is this: I help teams make complex platforms legible enough for users to trust and structured enough for teams to ship. That usually starts with the same three moves: understand the real workflow, make the risky decisions visible, and turn the best decisions into reusable patterns.

Across infrastructure, collaboration software, and AI-assisted data products, I have learned that product clarity and operating clarity rise and fall together. When users cannot see state, intent, or next steps, teams usually feel the same ambiguity in planning, handoff, and delivery. The work that follows is about fixing both sides of that problem at once.

Leadership Narrative

Career Throughline at Platform Scale

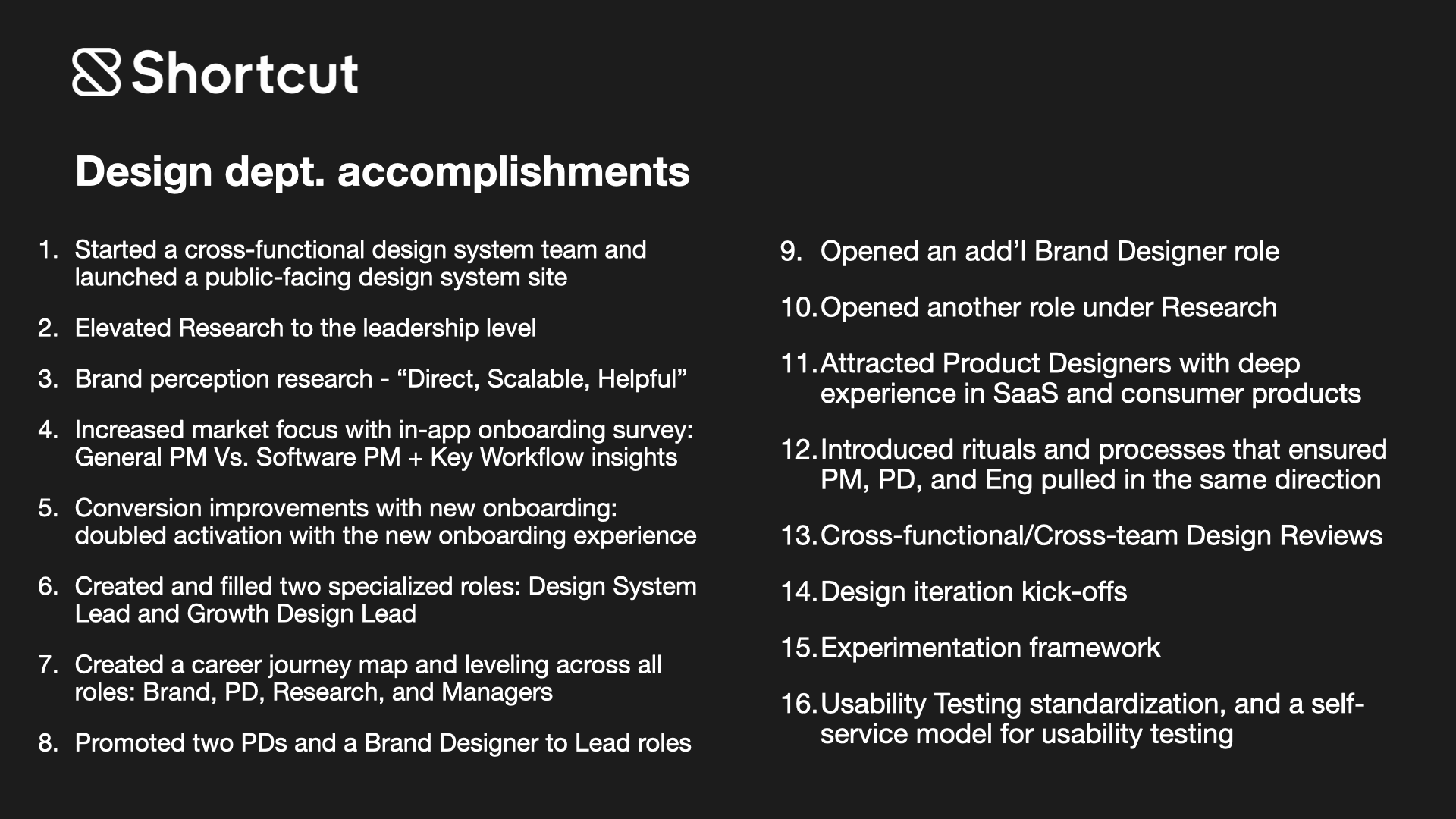

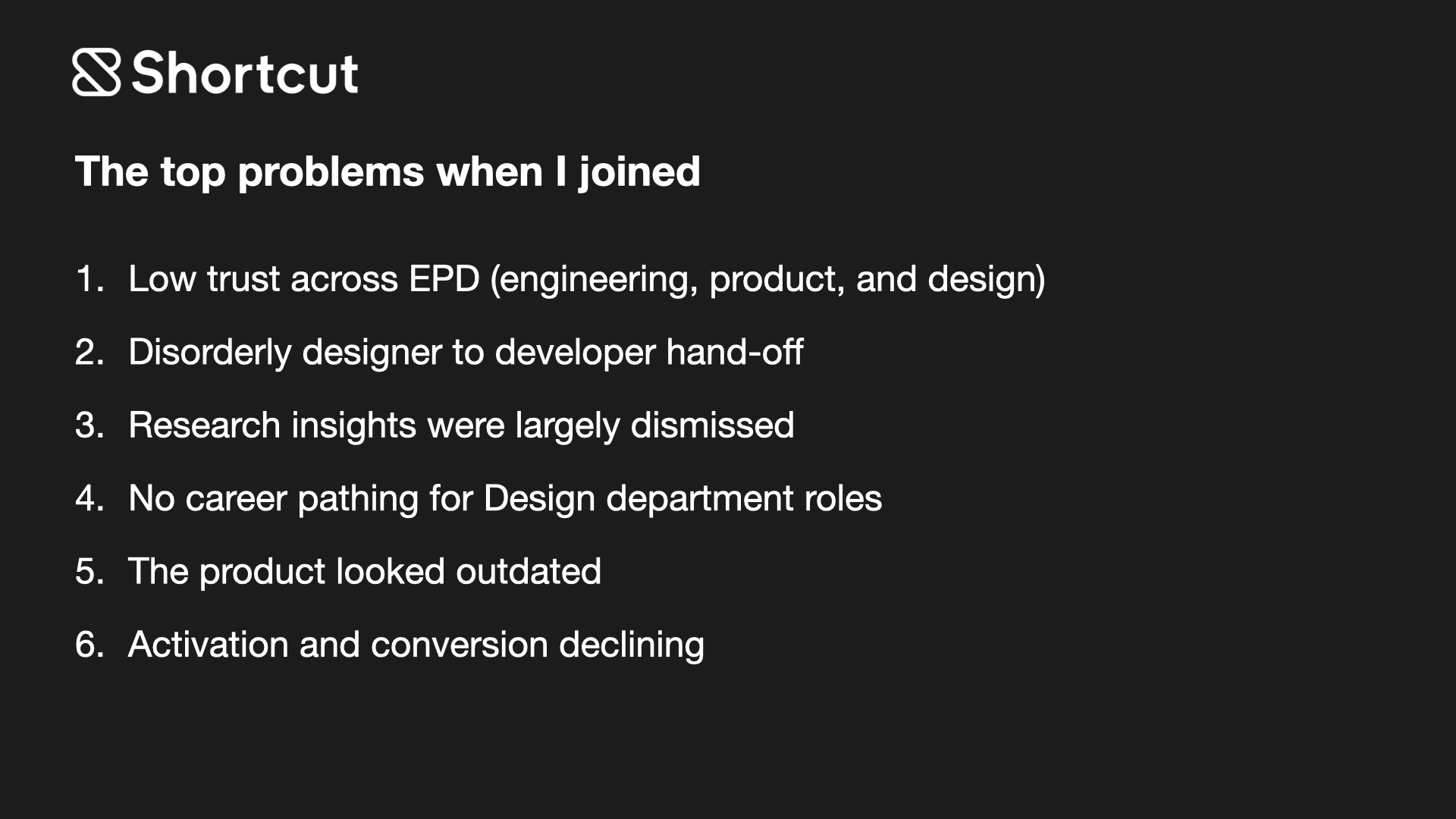

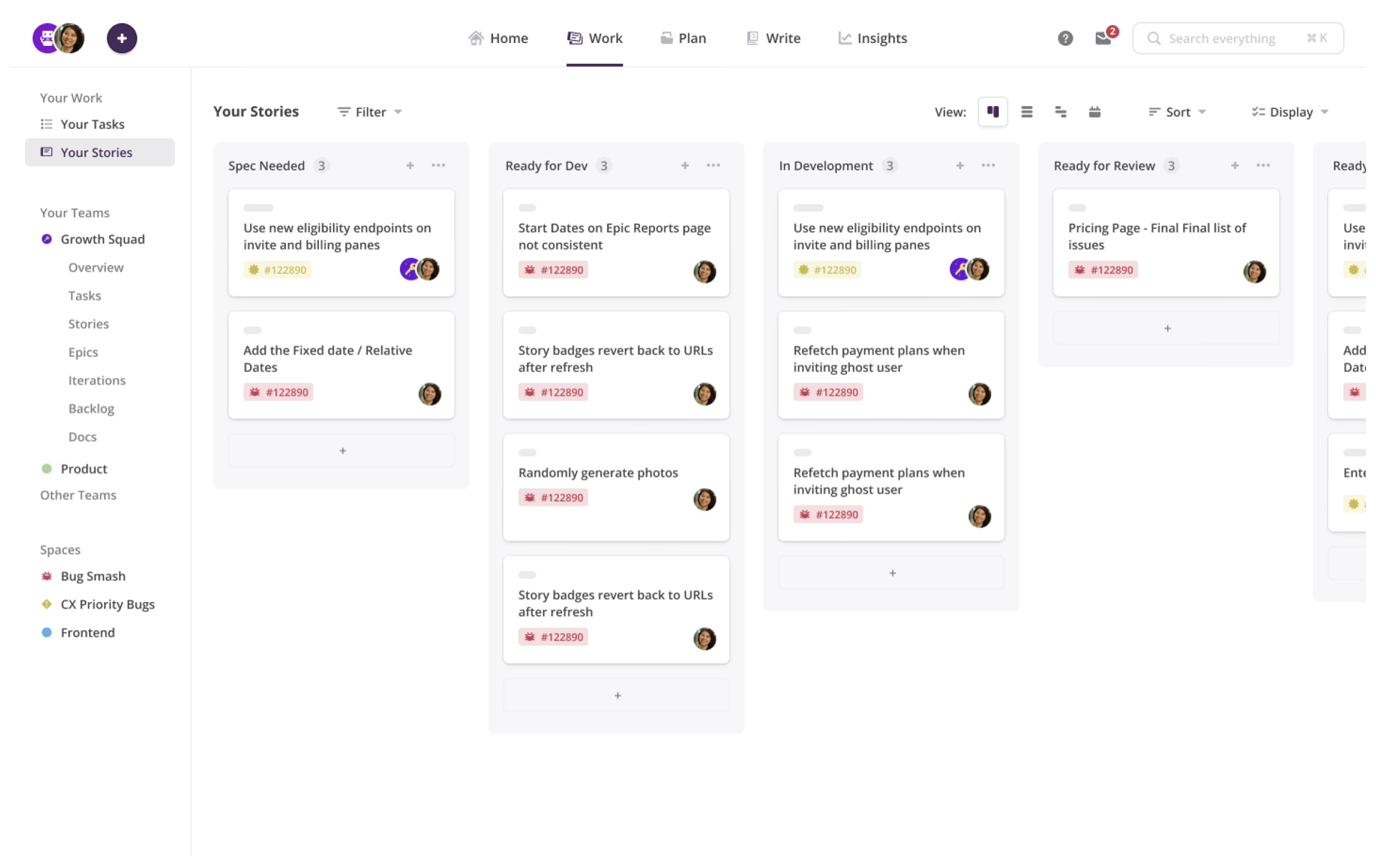

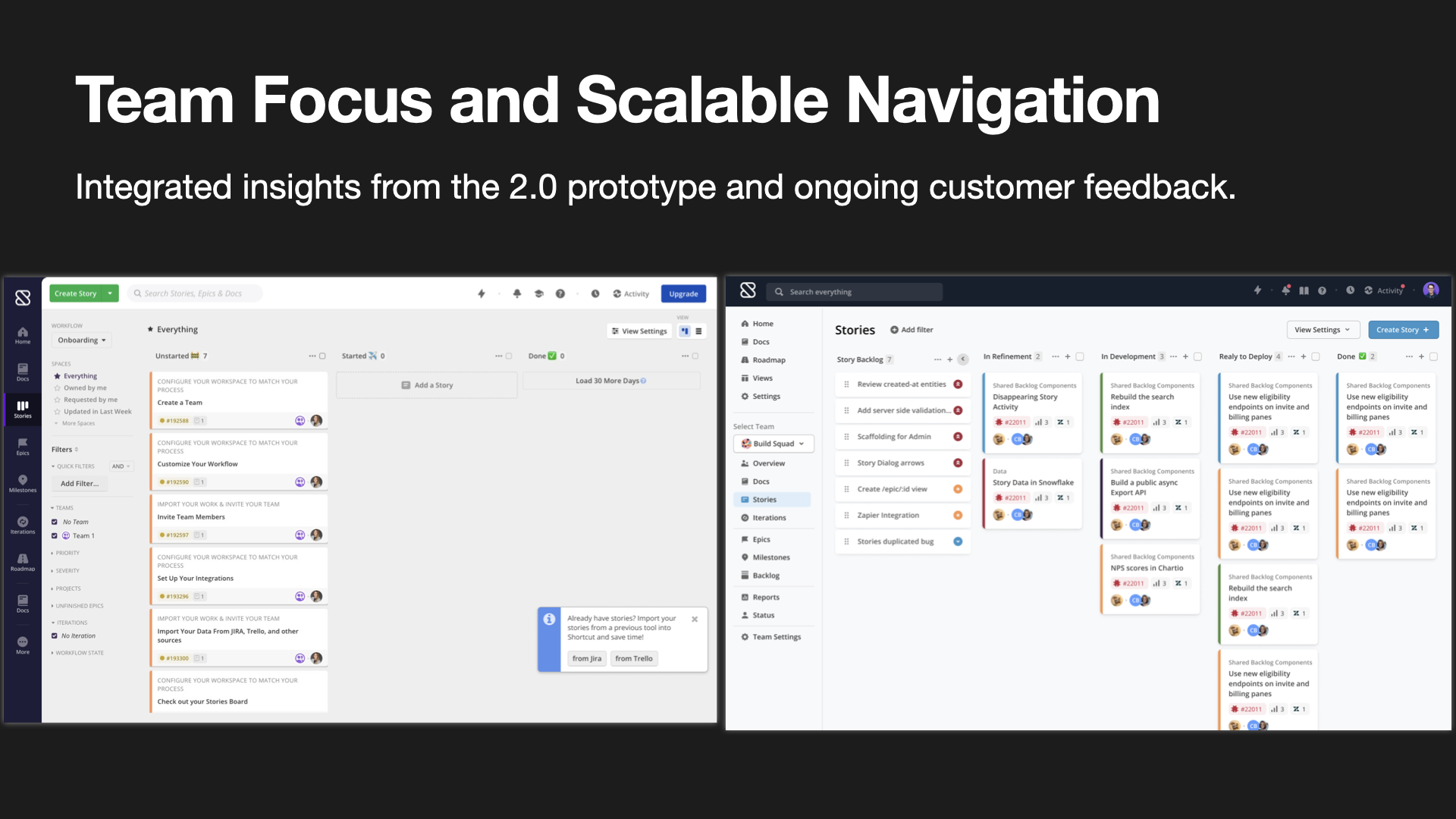

I have spent most of my career in platform environments where the surface area is wide, the user consequences are real, and design has to work across both product decisions and the way teams operate. At Pivotal Cloud Foundry, GitLab, Shortcut, and Nexla, the throughline was not one domain. It was one job: make complicated systems easier to navigate, then make the teams behind those systems better at shipping coherent decisions.

That is why my work tends to sit at the intersection of workflow design, systems thinking, and design leadership. I am most useful when the product is complex, the stakes are high, and the team needs both clearer UX and a stronger way of working.

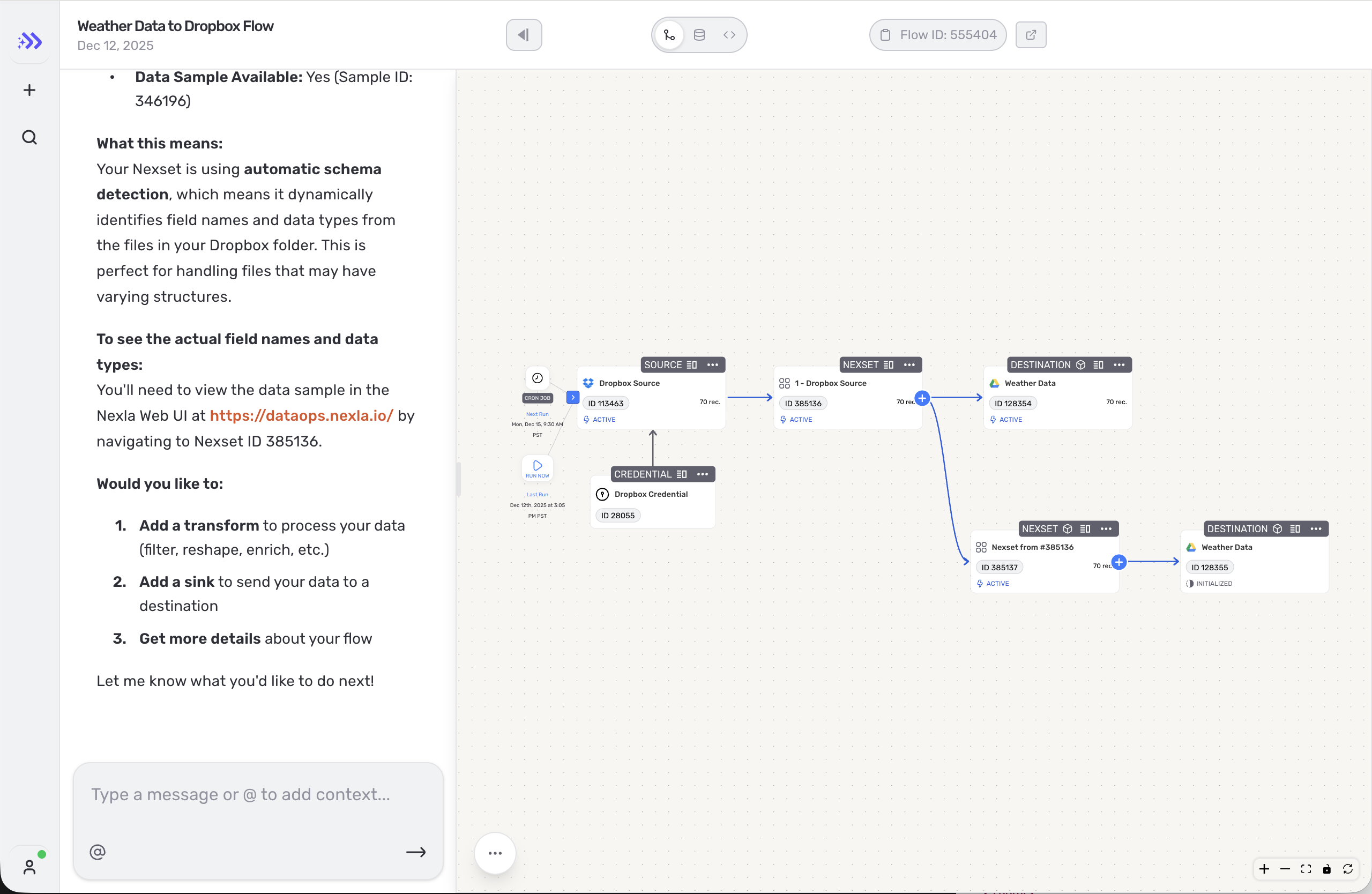

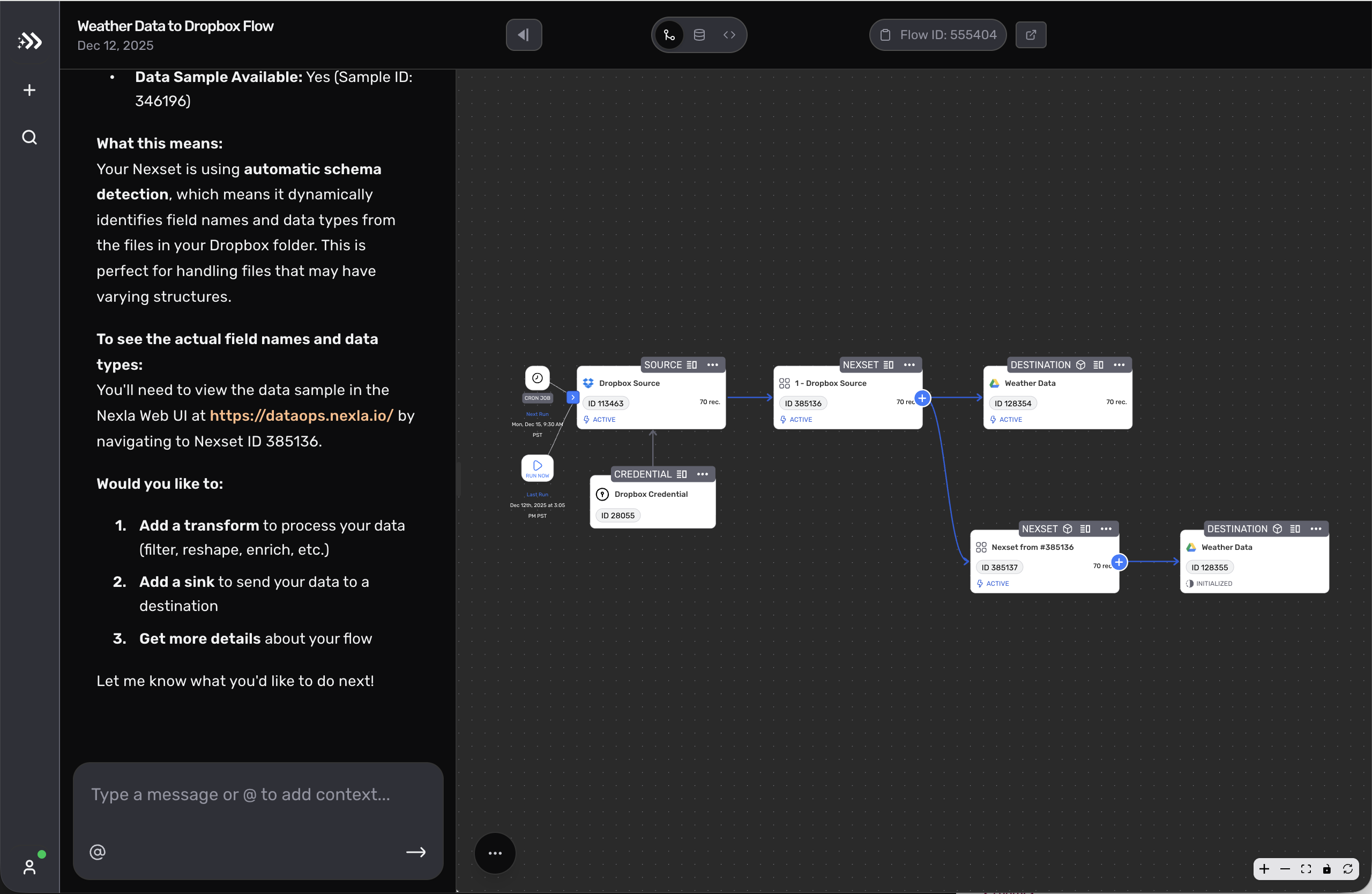

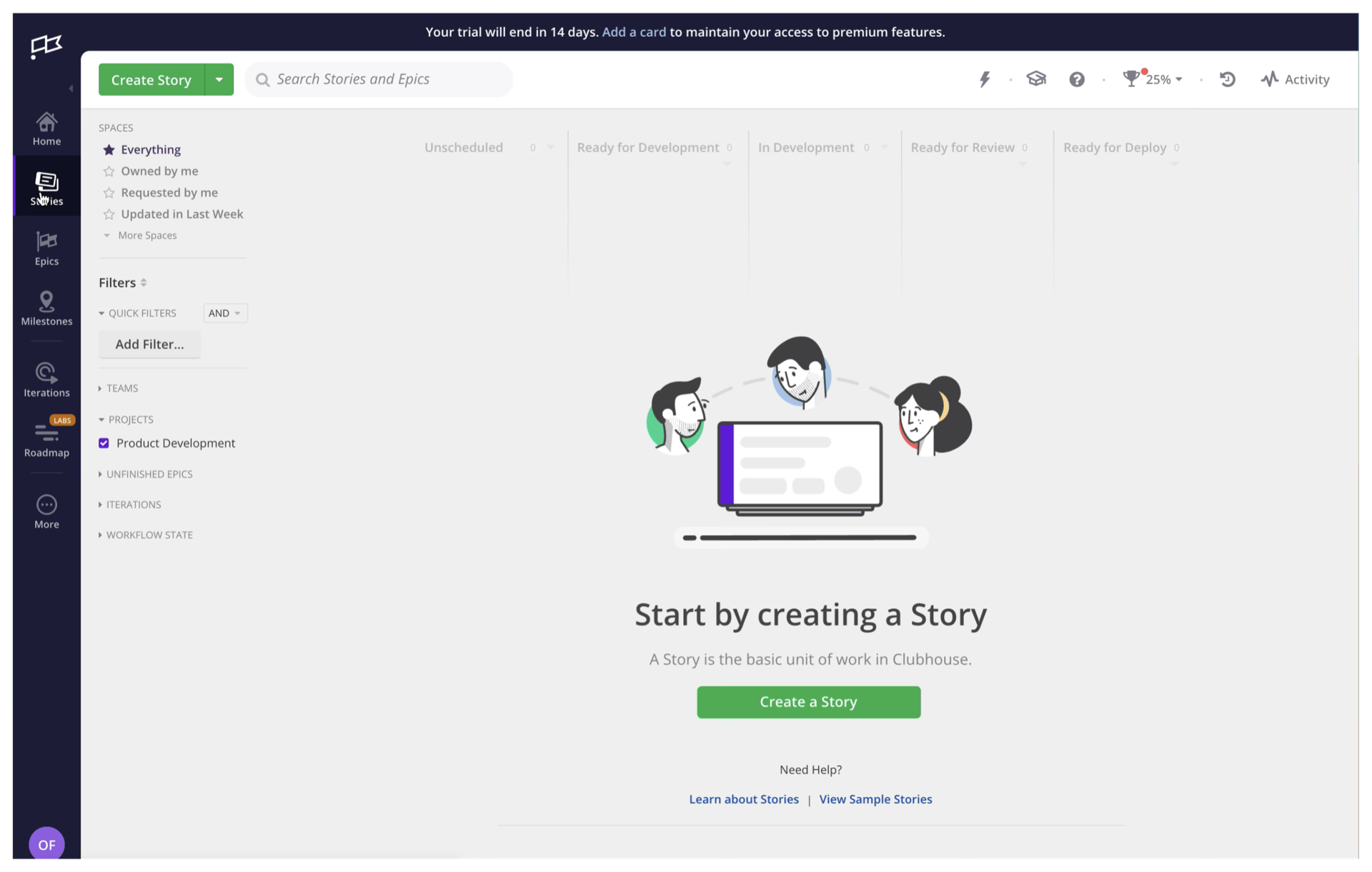

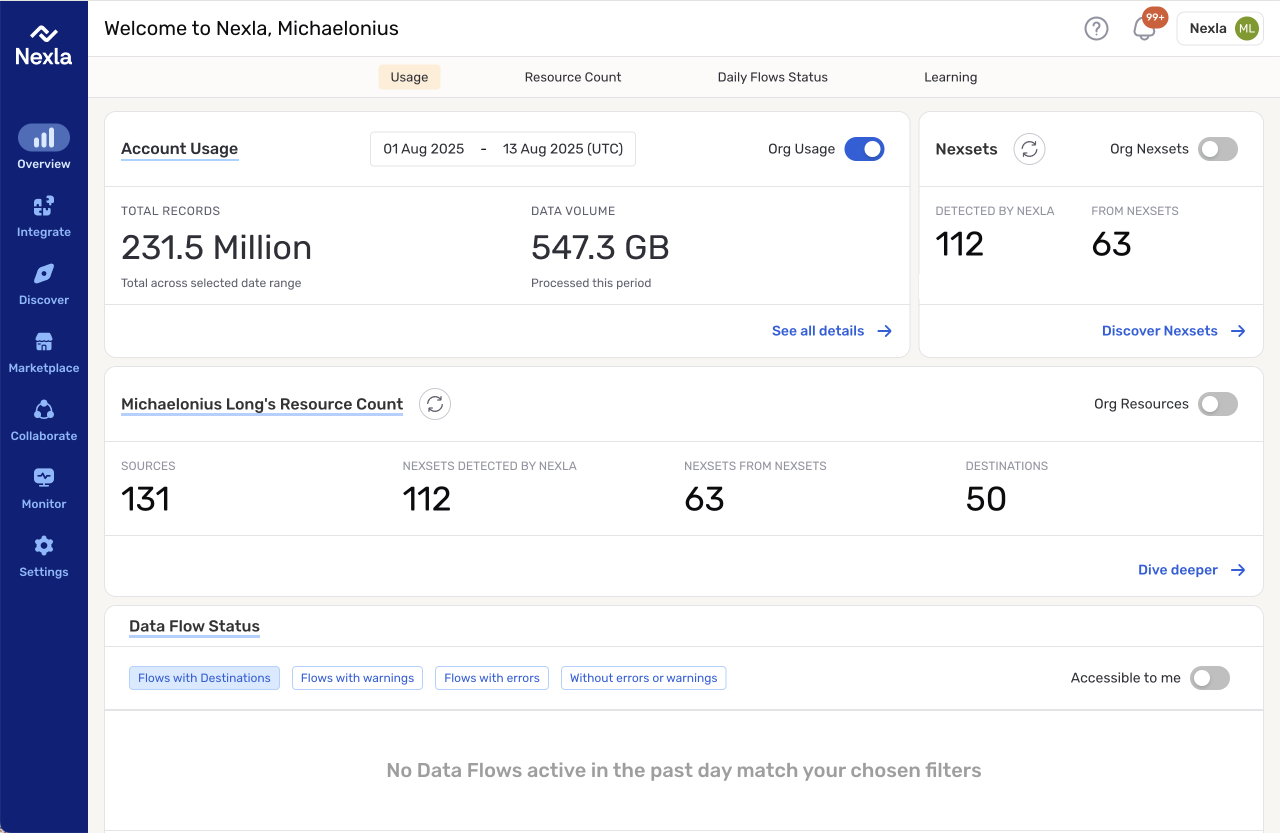

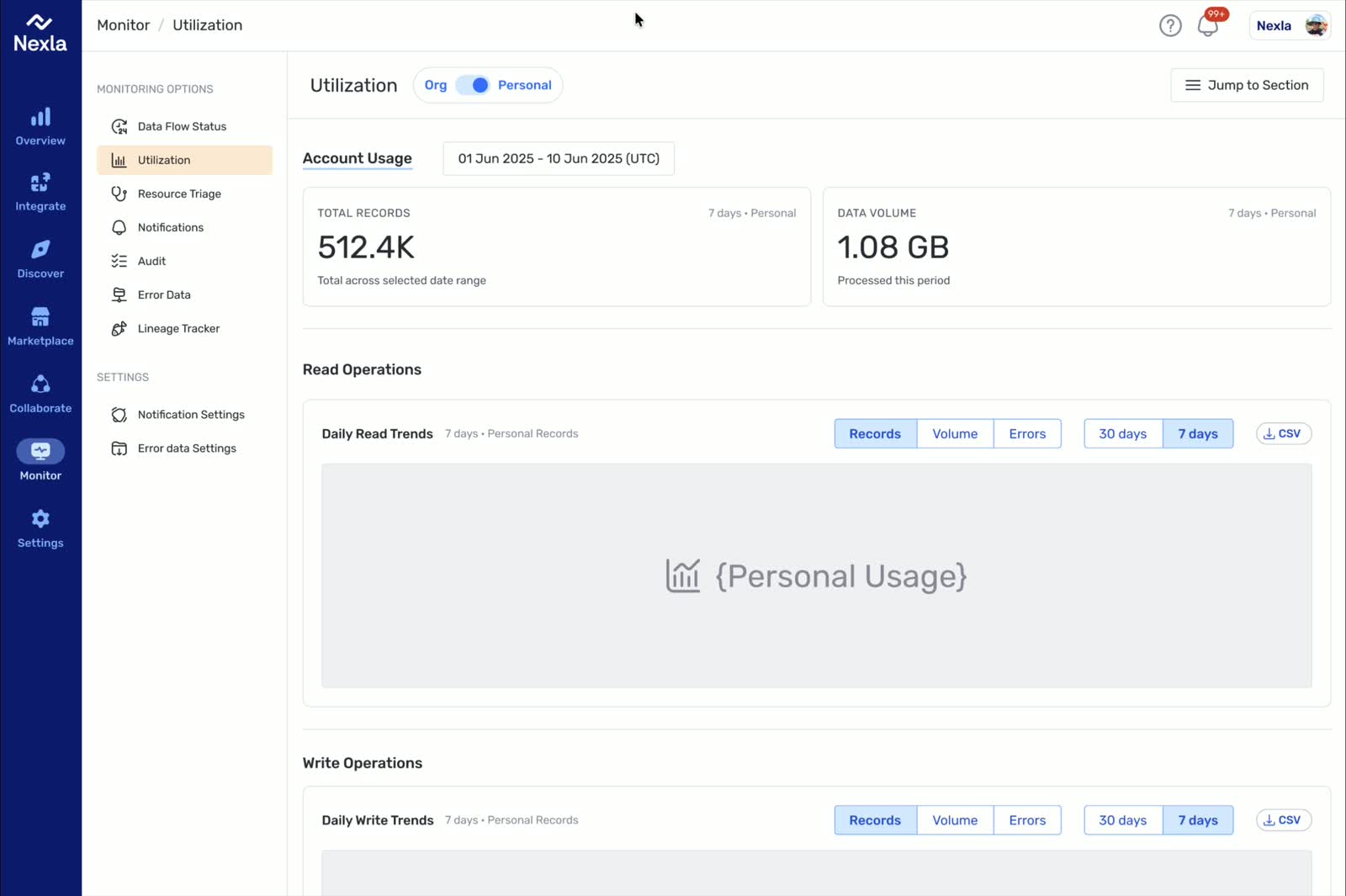

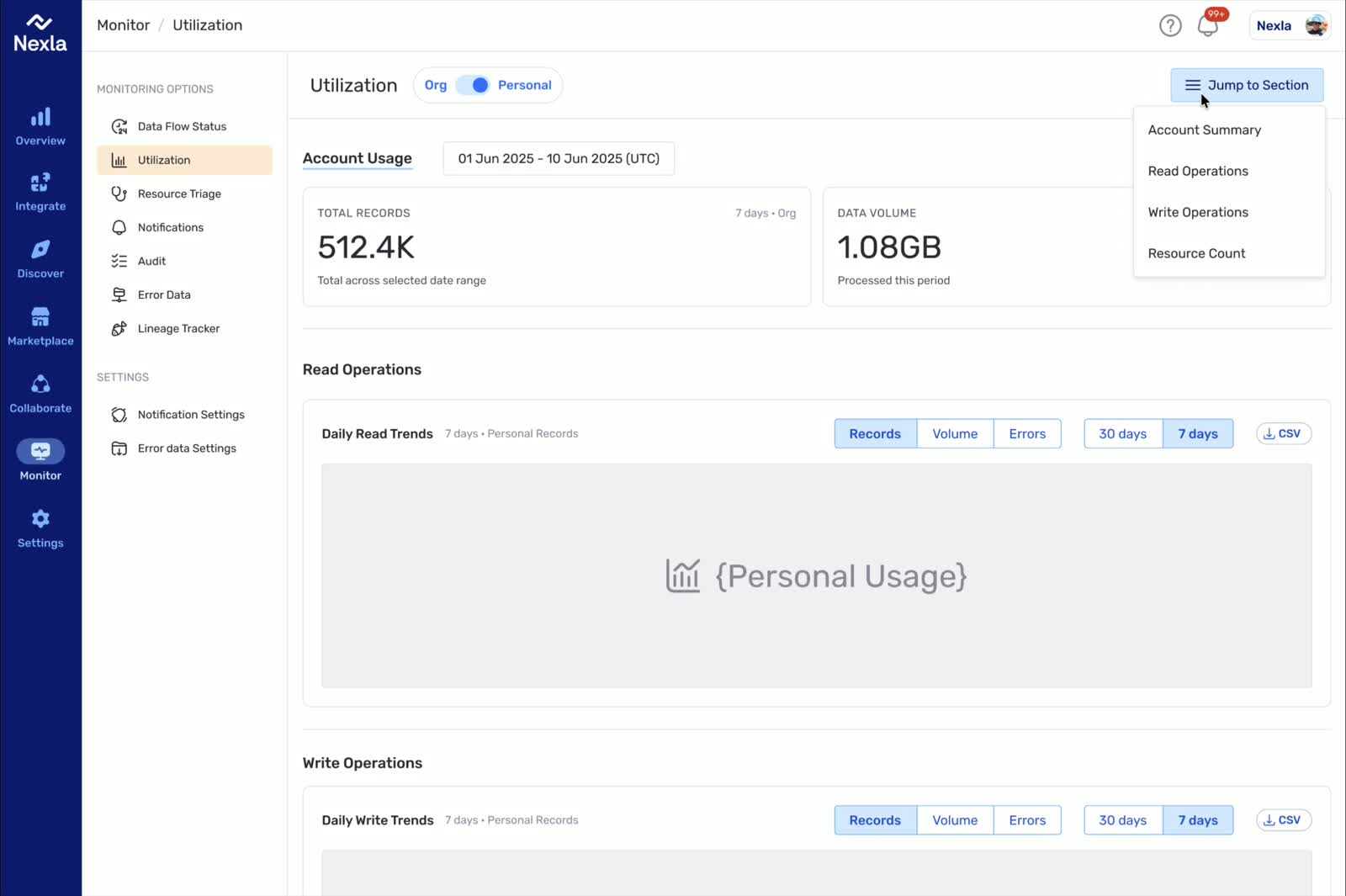

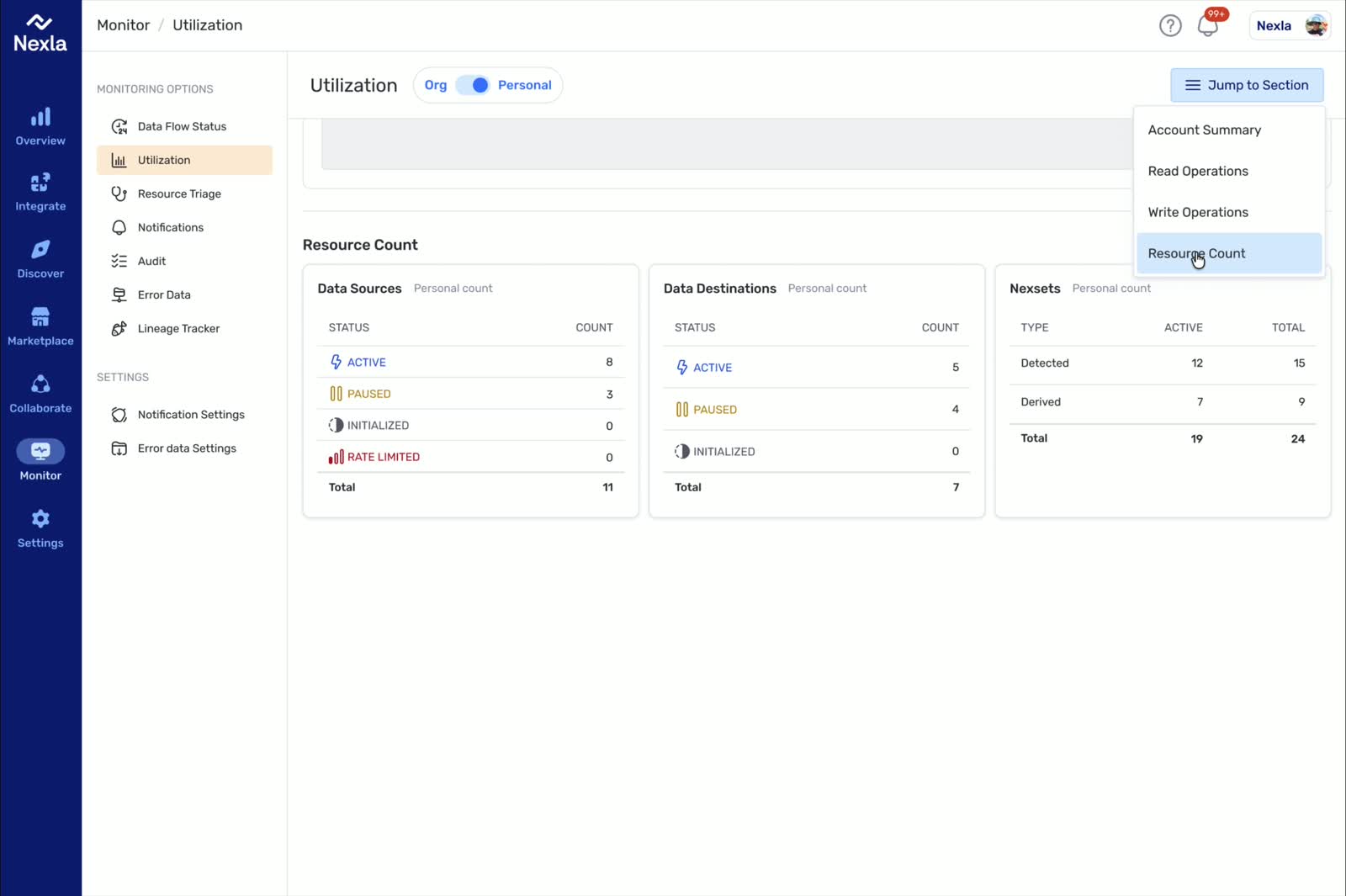

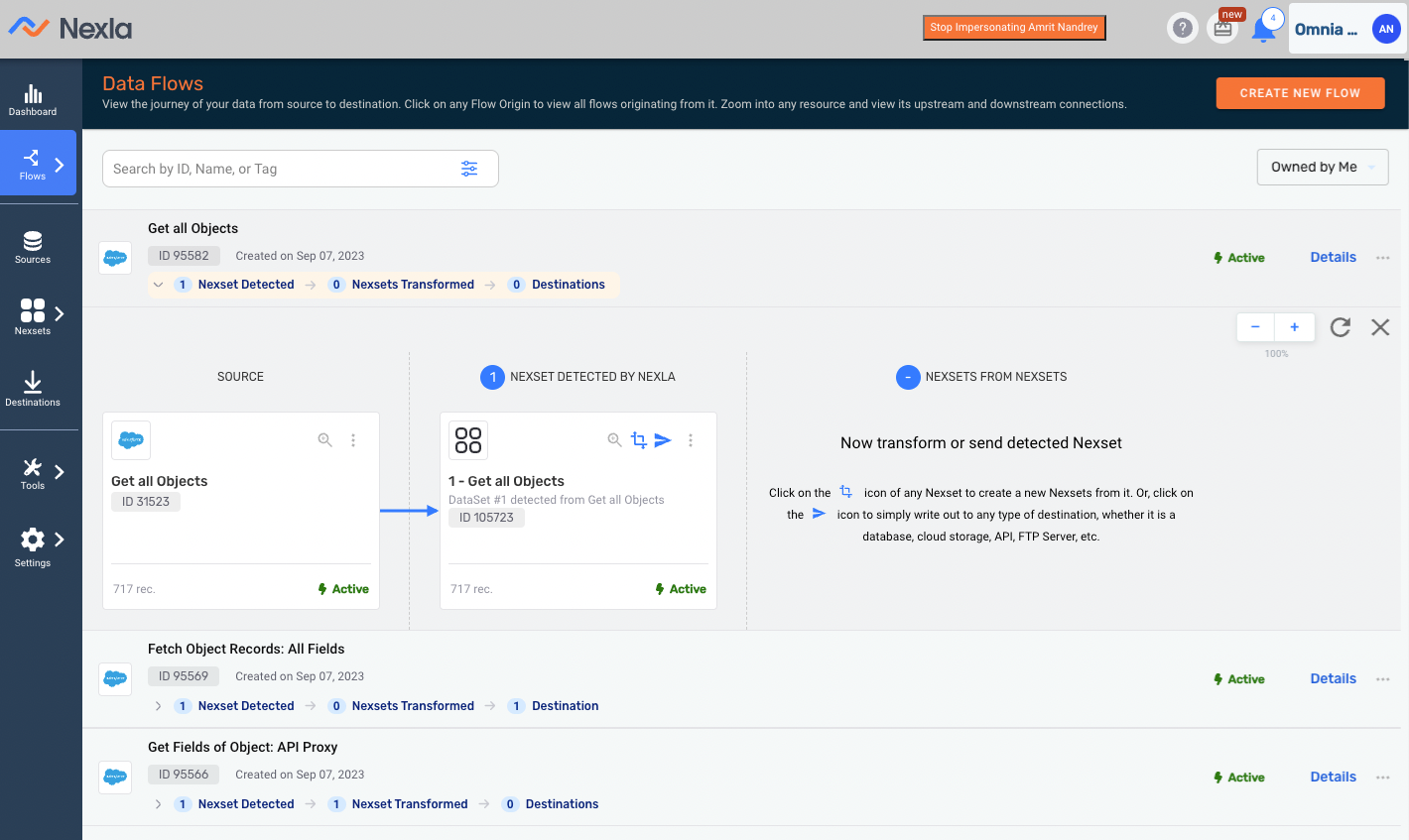

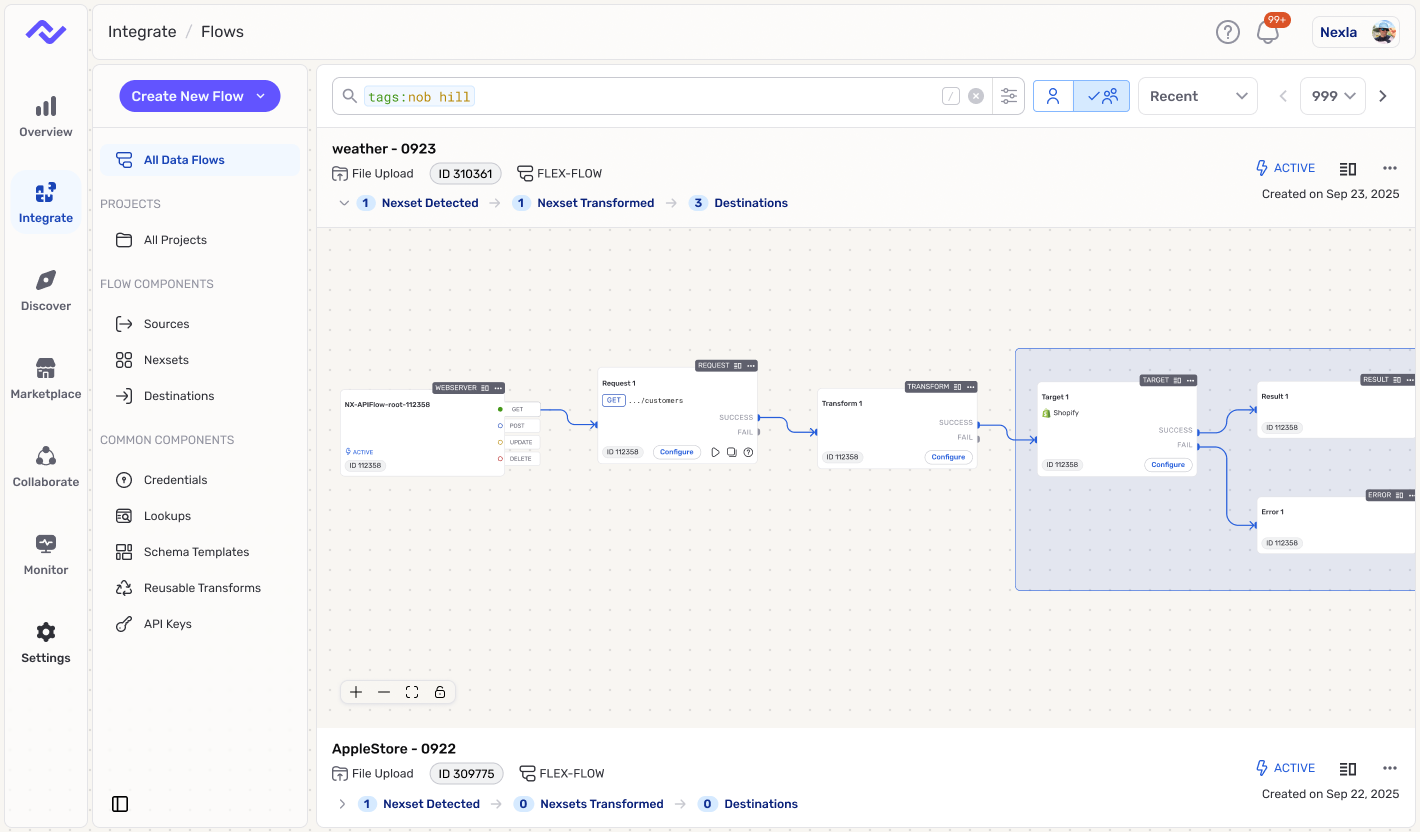

Nexla: Workflows Where Trust Matters

At Nexla, I was the first full-time designer and partnered directly with the CEO and product leadership across more than 50 surfaces. The core challenge was not just interface polish. It was turning connector-heavy workflows into something users could actually review, trust, and recover from when the system hit real-world complexity.

That work included a new 0-to-1 product, improvements to the core platform, and repeated decisions about how much automation to introduce without hiding the state users needed to see. The result was a clearer workflow model and a stronger design point of view on where AI belonged in the product.

AI Inside the Workflow (Not a Side Chatbot)

The AI pattern I trust most is assistance embedded in the workflow itself. The system can propose a mapping, transform, or next action, but the user still needs to understand what changed, preview the effect, and intervene before anything irreversible happens. In practice, that means propose -> preview -> apply, with guardrails and review points built into the product instead of pushed to the margins.
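To make the propose -> preview -> apply loop concrete, here is a minimal Python sketch. It assumes a simple field-mapping workflow. Every name in it (`Proposal`, `propose`, `preview`, `apply`) is hypothetical, and the lowercase heuristic is only a stand-in for an AI suggestion. It illustrates the pattern, not any product's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    """A system-suggested change to a field mapping (hypothetical model)."""
    changes: dict[str, str]  # source field -> proposed target field
    rationale: str


def propose(mapping: dict[str, str]) -> Proposal:
    # Hypothetical stand-in for an AI suggestion: map each unmapped
    # source field to its lowercase name. Nothing is changed yet.
    suggested = {src: src.lower() for src, dst in mapping.items() if not dst}
    return Proposal(changes=suggested, rationale="fill unmapped fields")


def preview(mapping: dict[str, str], proposal: Proposal) -> list[tuple[str, str, str]]:
    # Show exactly what would change as (field, current, proposed) rows,
    # without mutating the mapping.
    return [
        (src, mapping.get(src, ""), new)
        for src, new in sorted(proposal.changes.items())
        if mapping.get(src, "") != new
    ]


def apply(mapping: dict[str, str], proposal: Proposal, approved: bool) -> dict[str, str]:
    # Guardrail: nothing changes without explicit user approval.
    if not approved:
        return dict(mapping)
    # Return a new mapping so the prior state stays available for recovery.
    updated = dict(mapping)
    updated.update(proposal.changes)
    return updated


current = {"Email": "email", "FullName": "", "Zip": ""}
proposal = propose(current)
for field_name, before, after in preview(current, proposal):
    print(f"{field_name}: {before!r} -> {after!r}")
result = apply(current, proposal, approved=True)
```

Returning a new mapping instead of mutating the original keeps the previous state available for recovery. The `approved` flag marks the review point where the user can intervene before anything is applied.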

That distinction matters in enterprise and system-of-record contexts. When mistakes carry real cost, "helpful" is not enough. The workflow has to remain legible, governable, and observable, and the surrounding patterns have to be consistent enough for teams to ship them reliably.

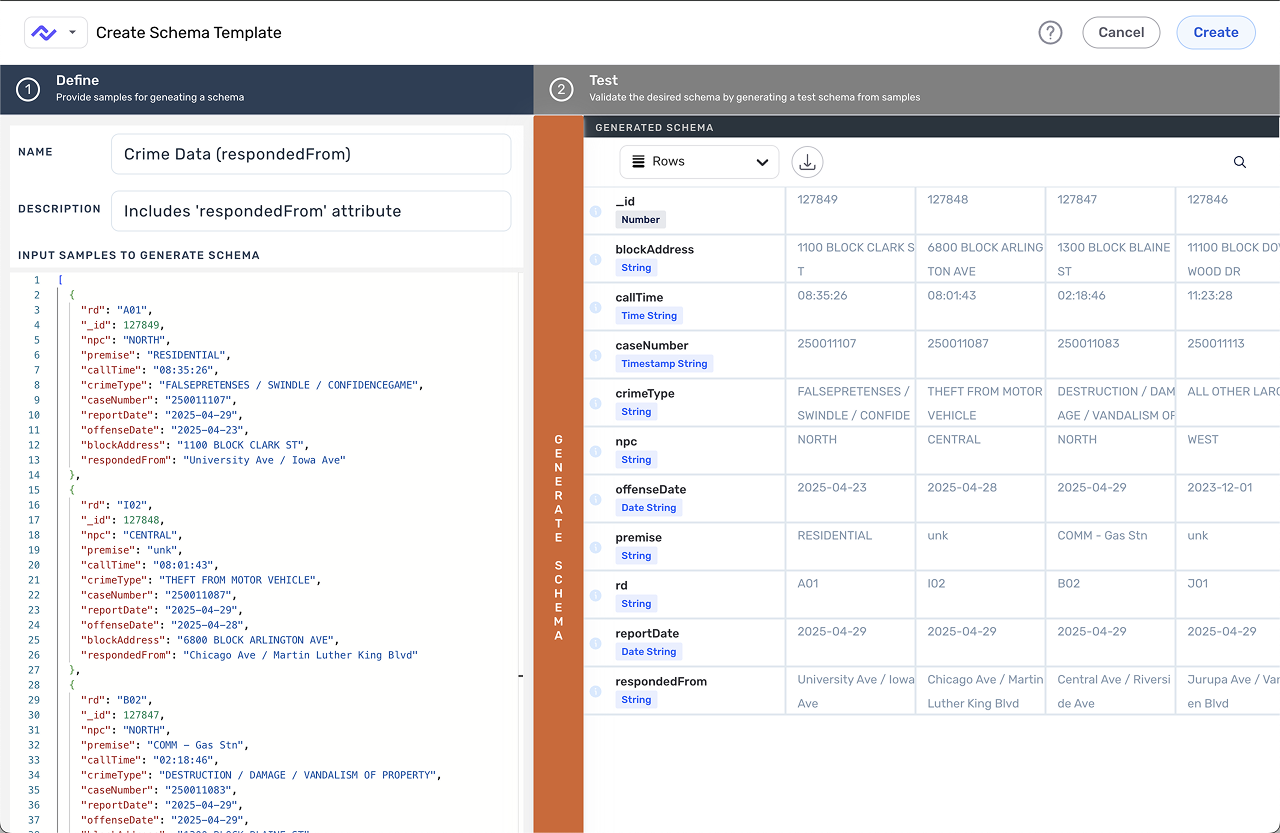

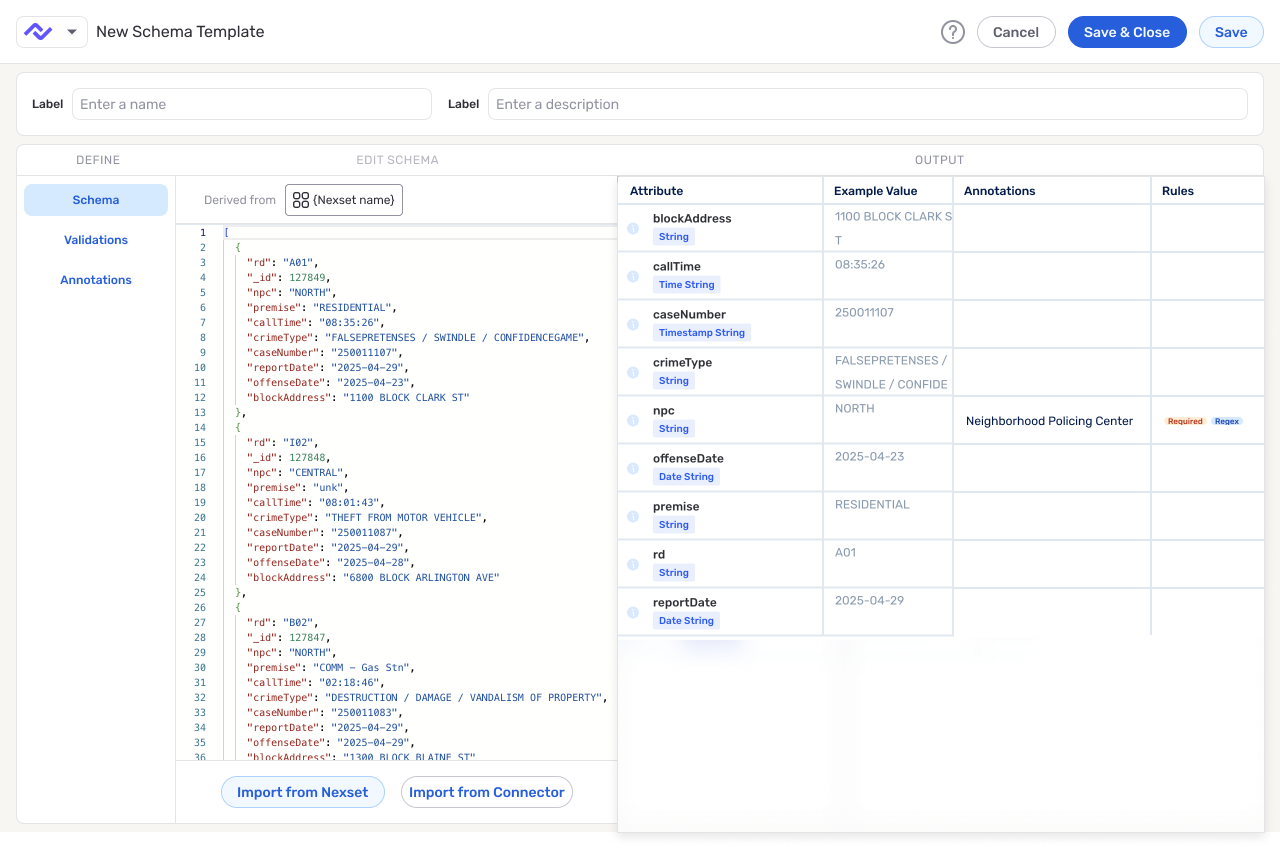

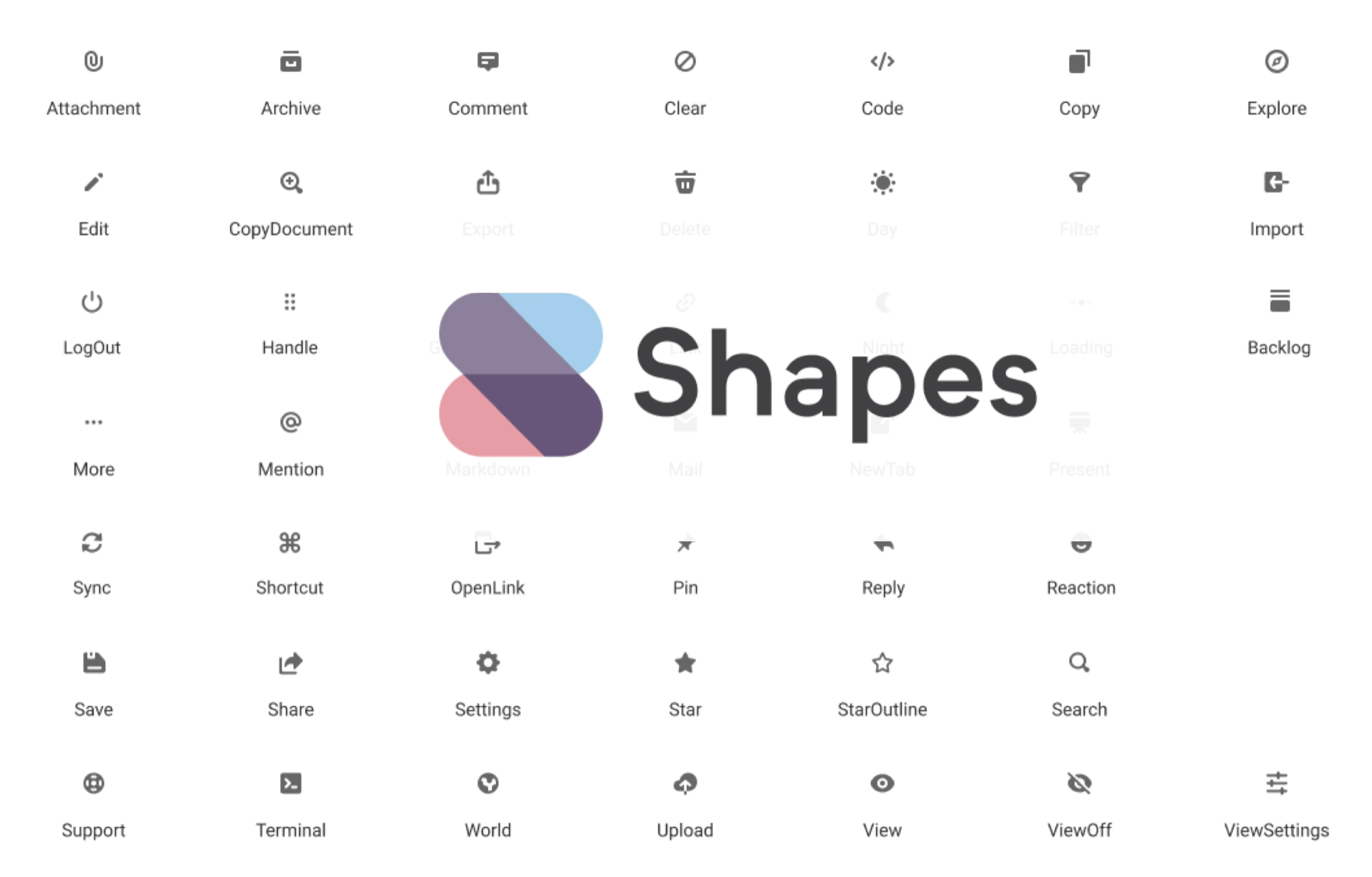

One Shared Platform Experience Across Two Products

As the product footprint expanded, the design problem expanded with it. I helped unify the core platform and Express.dev so they felt like one company had built them, not two adjacent tools with different standards. That meant defining tokens, components, and workflow patterns for connectors, schema, review states, and execution feedback, then reinforcing them through prototypes, implementation reviews, and shipped changes.

The operating rhythm was part of the design work. Design, code, PR review, feedback, and iteration all had to support the same product language if the experience was going to scale.

Why This Role

The work I am most drawn to combines data complexity, workflow trust, and product systems. I am strongest in environments where advanced capability has to feel legible to users and coherent across surfaces, because that is where design can reduce real risk while improving speed.

That is also why this portfolio centers on trusted workflows rather than isolated screens. The throughline across the cases is consistent: make difficult actions easier to understand, create patterns teams can ship repeatedly, and keep AI assistance grounded in reviewable behavior instead of novelty.

Case Map

Two Proof Cases Behind the Narrative

The two proof cases below show the same leadership pattern from different angles. Express.dev is about AI assistance inside a live workflow: acceleration only works when users can still understand system state, trust the handoff, and recover cleanly. Schema Template Designer shows the same discipline applied to dense product complexity: define a stronger mental model, reduce ambiguity, and turn a partially formed feature into a workflow teams can actually ship.

Together, they show the balance I care about most in platform work: advanced capability without mystery, and better product judgment without slowing delivery.